VAM Nauseum: Bleeding the Patient

In recent days, and even going back months (years?), I’ve been pestering John Fensterwald about certain details in the overall media coverage of teacher evaluation and value-added measures. Let me say up front that I know John personally from numerous encounters at various events, and I have great respect for the work he does on his blog, Thoughts on Public Education. I have faith in his objectivity and his sincere desire to understand the complete story on any issue, and even when I disagree with him, I appreciate what he brings to the discussion. That said, I have some comments below that might be seen as rather blunt, but I feel confident that they will be taken as intended, as a friendly disagreement among people of mutual good will.

On June 1, John wrote “Experiments in Evaluating Teachers” to provide some interesting developments on the issue of teacher evaluations in California. This topic is of great interest to me as a co-author of the ACT report on teacher evaluation (see Publications, above), as a member of a union negotiating team working on teacher evaluation, and as a teacher and parent in California public schools. I would recommend John’s post in its entirety, but I took exception to this part in particular: “At the same time, test results are an objective measure and can be a good diagnostic tool, telling a teacher which types of students are learning the fastest, and which teachers in a school are having the most success.” I left the following comment on John’s blog post:

I don’t think we all agree the tests are objective – their biases simply originate further away from the classroom. Can you back up the claim that the tests have diagnostic value? Can you specify what you mean by “success”? Because I think what you mean is that the tests show which teachers’ students do best on the tests, and I do not accept test results as a term equivalent to “success”.

Furthermore, I have mentioned several times to you offline, and on this blog, and on my blog, that the position of APA, AERA, and NCME is that tests validated for one purpose should not be held valid for other purposes, especially where high-stakes decisions come into play. No one ever seems to have an answer for that – they just go ahead and do it. When will you, or any reporter, ask TAP, or LAUSD, or even NEA how they get around these words of caution from the leading professional bodies in educational research and measurement?

(Just to clarify, NEA as a whole hasn’t adopted that recommendation, and the language of the recommendation seems to describe tests that haven’t been developed yet – perhaps looking ahead to the supposedly improved instruments coming with the Common Core adoption).

I’m all for more substantive, ongoing, rigorous teacher evaluations, and would recommend that anyone interested in teacher perspectives on improved evaluations also look at a report I helped produce:

https://accomplishedcaliforniateachers.wordpress.com/act-publications/

John wrote back to me as follows:

David: Not all of the recent evaluations on value-added metrics pan it. This from an April report of the Brown Center on Education at the Brookings Institution. Susanna Loeb of Stanford was a contributor. “We have previously issued a report that describes some of the imperfections in value-added measures while documenting that: a) they provide one of the best presently available signals of the future ability of teachers to raise student test scores; b) the technical issues surrounding the use of value-added measures arise in one form or another with respect to any evaluation of complex human behavior; and c) value-added measures are on par with performance measures in other fields in terms of their predictive validity.” In other words, there’s subjectivity in every form of evaluation, including classroom and peer evaluations. So test scores, whether CSTs or locally developed assessments, should be one of several measures. My gut says counting test results as 30 percent of an evaluation, which LA is proposing and the Obama administration has pushed through Race to the Top, is too high. I look forward to the new assessments under Common Core, probing deeper levels of learning, as an improvement to the CSTs. I sense you want to do away with the CSTs altogether. I strongly disagree.

As for using CSTs as a diagnostic tool, I refer to a presentation by LAUSD at the Ed Trust-West. The district hopes to use value-added results, now known as Academic Growth over Time, to answer these questions:

- Are students in a particular region, school, or grade level growing faster than students just like them throughout the District?

- Are specific groups of students in particular schools or classrooms growing faster or slower than the district average?

- And with further observation, what instructional methods, programs and interventions are working to improve student outcomes?

- What is the distribution of effective educators? Do we have the most effective educators working in the right places to achieve our goals?

- What can we learn from places where we are achieving remarkable results?

This is not a punitive approach. The results can inform teachers and the district.

What John has offered above boils down to three ideas I’ll agree with for the sake of argument:

- Test scores generated by a teacher’s students may be reasonable predictors of future students’ test scores.

- Other evaluation methods have similar weaknesses.

- Districts are using test scores for various purposes including teacher evaluation.

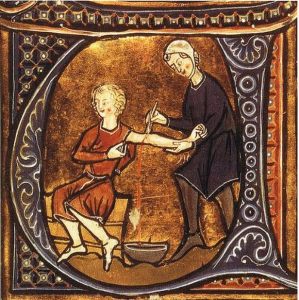

But still, as much as I respect John, I think he’s spinning his wheels here. First of all, the fact that LAUSD and other districts are going ahead with these plans is not evidence that the plans are well-designed, grounded in research, or able to produce results they can use in a valid way for their purposes. The bleeding of patients used to be widely practiced “medicine” – carried out by people who all agreed it was an effective treatment, but that didn’t make bleeding an effective treatment. I reiterate: no one seems to be addressing the NCME/APA/AERA policy on test validation; tests validated for student assessment are not necessarily valid for teacher evaluation. It may be true that researchers are finding ways to predict future test scores based on test scores, but that is not the same thing as proving the teacher is responsible for the test scores. I direct skeptics to the “false positives” found in Jesse Rothstein’s 2010 study of value-added measures. It turns out the VAM data can also be used to show that fifth-grade teachers affect fourth-grade test scores. Since we know that’s impossible, the data are showing us that there are unaccounted-for causes creating that effect.

In a prior blog post, I cited study after study arguing for all sorts of school variables affecting test scores. Those potential impacts on test scores can only be ignored for VAM-based teacher evaluation if you can prove that the effects are the same for all teachers at a school. Good luck with that. Those of us who work in schools can tell you how much variability there is from room to room and from year to year, despite the fact that you’re looking at a whole bunch of classes labeled “Grade 5” or “English 10.”

But there’s a deeper philosophical problem here, and it’s once again revealed in the language used in some of the research reports and political/bureaucratic documents. Look at some of the examples from above (with the caveat that I’m reacting to this information as presented and without having viewed the originals):

- The Brookings report talks about “performance measures” – they mean test scores, and test scores alone.

- The LAUSD document asks about “growing” – they mean growing test scores, and test scores alone.

- LAUSD wants to compare “student outcomes” – they mean student test scores, and test scores alone.

- The same document refers to “effective educators” – they mean effective at raising test scores, and test scores alone.

- LAUSD refers to “remarkable results” – they mean remarkable test scores, and test scores alone.

It would be difficult to overstate the philosophical objections I have to this approach to teacher evaluation, but my philosophy is firmly grounded in teaching realities that I think are misunderstood or overlooked by the other side in this debate. As I’ve written before, those who espouse the importance of standardized test scores reveal a fundamental misperception of my job, my students, and my school.

John suggested I might “want to do away with the CSTs altogether,” but I wouldn’t go that far. I accept that a school district could use California Standards Tests and other measures in a useful way to observe significant trends in schools in the district, or among certain groups of students; that’s what the tests are designed to do, and what I assume to be their valid use per NCME/APA/AERA guidelines. However, in their zeal to improve teacher evaluation, too many districts and reformers are trying to double down on the same limited tools they’re already over-invested in. When they do so, they risk creating an inferior evaluation system. John called attention to the portion of the Brookings report that says other types of evaluation have their flaws too. Let’s consider, for example, lesson observations as an evaluation tool. Their great advantage over test scores is that they can be tailored to examine every facet of teacher practice, they can be repeated, they can be recorded and analyzed – all of which will engage participants in meaningful examination of professional practices.

In the book Instruction That Measures Up: Successful Teaching in the Age of Accountability, W. James Popham offers up this summary based on his decades of work in the field: “Because of the inherent particularism enveloping a teacher’s endeavors, I believe the evaluation of teaching must fundamentally rest on the professional judgment of well-trained colleagues” (146). In an op-ed published last year (“Test Scores and Teacher Competency”), Popham wrote that tests for teacher evaluation are only appropriate if the teachers know what will be tested, and the tests measure what the teacher taught. “If either of these two requirements has not been satisfied, then the use of students’ test scores to evaluate teachers is unwarranted. Regrettably, at the moment, in almost all of our 50 states, neither of these requisite conditions has been satisfied” (emphasis added).

Can anyone out there rebut these experts head on, and overcome these obstacles to VAM-based teacher evaluation? So far, the answer is no. They fall back on what is statistically significant with current tests, though they can’t win the debate on what is educationally or intellectually significant about the tests. They say the tests will only be one of multiple measures, ignoring the long track record of failure when we assign any high stakes to such inadequate measures. They point to the fact that the practice is already in place, as if the bleeding of those patients should be acceptable because someone else has accepted it. Rather, I take exception to every one of those claims, and repeat, ad nauseam: you’re wrong. You’re very, very wrong. Stop the bleeding.

What you are proposing is also badly flawed: “Let’s consider, for example, lesson observations as an evaluation tool. Their great advantage over test scores is that they can be tailored to examine every facet of teacher practice, they can be repeated, they can be recorded and analyzed – all of which will engage participants in meaningful examination of professional practices.”

To use your analogy, this is like judging doctors based on their cupping procedure and whether it follows proper guidelines for bleeding, not whether or not the patients survive.

Thanks for making me clarify – I believe that student performance and student learning must be part of teacher evaluation. But not standardized test scores. Imagine teachers having weekly conversations about curriculum, lessons, instruction, and student performance on projects, tests, and all sorts of assessments. It’s working in many schools, but these growth and improvement and feedback systems have been separated too much from evaluation. For more on the use of authentic student performance in evaluating teaching, look at the National Board Certification program, or the ACT report on teacher evaluation.

Dear David

We approach teacher evaluation differently in the UK. It comes under Performance Management where a range of evidence is discussed and targets set.

On the question of teacher evaluation and test scores, I would say that test scores are not irrelevant in judging teacher performance.

The final examination results achieved by pupils in a teacher’s class, self-evidently, do provide a measure of the effectiveness of the learning experience for the pupils in that class. If they didn’t, this would suggest that the examination process was incapable of awarding credit for what a pupil has learnt, and clearly examinations are designed to do just this.

If you have two classes taking the same examination then you can compare the effectiveness of the learning experience between these two groups. Of course, if there were differences we would wish to see what caused them with the aim of trying to improve those factors that contribute to learning effectiveness.

What we are comparing is the impact of ‘provision’ between two groups. If the groups were statistically equivalent then what this would do is take out the differences between the groups. If one class was made up of pupils with high prior attainment and one made up of low prior attainment, then we don’t have a constant in the equation and therefore could not begin to compare the quality of teaching between the two groups.

If we have two very similar groups of learners, what we can now do is compare the quality of experience of each group as reflected by the examination results of each group. This would provide evidence of the difference in ‘provision’ experienced by each group. Provision is what a school provides, of which teaching is the most important factor. But there are other factors too, for example the school climate, procedures and policies, resources etc. But if these factors are similar for each group then what we are now doing is looking at differences in the impact of teaching.

In the UK we would do this with the intention of supporting effective teaching and sharing the effective techniques used by the most effective teachers, with teachers who might benefit from more support, e.g. newly qualified teachers. Teachers that did not improve as a result of support would follow a procedure that might eventually lead to their dismissal, but would essentially be about supporting their development.

The other thing that is important when evaluating teaching is to examine the teaching as seen in lessons and assess it by judging the lesson against a whole range of established criteria that have been accepted as describing effective teaching and learning. There is an example of those criteria at this link: http://www.4matrix.org/ofsted. I have written about some of these issues, particularly issues to do with the use of performance data and school evaluation, at http://www.mikebostock.wordpress.com

We can see effective teaching with groups of pupils with learning difficulties. These might never achieve high test scores. The important thing about teacher evaluation is to be intelligent about it. The relationship between teaching and learning is complex, and we must assess aspects of it using separate criteria and understand the disposition and prior attainment of the pupils that are taught.

If, as appears to have happened in the US, we see a system where the quality of teaching is deduced solely on the basis of pupils’ test scores with no other considerations, then it needs to be challenged by education experts who understand why this is simplistic and illogical. If teachers are losing their jobs through such a flawed process, then I would imagine they would stand to gain substantial compensation in the future when such practices are shown to be reckless, seriously flawed, and deeply damaging in their consequences.

Mike Bostock

I should add that the process for deciding a teacher’s capability is triggered by a series of lesson observations that would be judged as ‘inadequate’ using the standardised criteria for grading the quality of teaching. The procedure that followed would offer progressive help and support to that teacher over time with review periods. If it turned out that the teacher could not meet the standard the profession required then they would be declared incapable of teaching and would have to find a career more suited to their skills.

The UK Government has said that it intends to speed up the process, because it can drag on in some cases with damage being caused to the education of pupils. But it has thus far remained a process that is fair to the profession. The idea that a set of pupil scores would automatically lead to the dismissal of a teacher would seem to us in the UK reminiscent of a situation that might fall from the pen of Franz Kafka or George Orwell.

Thank you for the detailed reply and an international perspective. What you describe sounds like a much more reasonable use of student data. The final exams are presumably aligned to the curriculum, and the students presumably are invested in the results. With the state tests so in vogue right now, neither of those statements is true, and that’s exactly why experts like James Popham have stated unequivocally that the use of state tests for teacher evaluation would be a bad idea. I also like the emphasis in your comment regarding using data as one possible indication that a teacher can improve, rather than jumping to the question of when/how to release the teacher. In fairness to the policies I criticize, and their advocates, I don’t think anyone is proposing that test scores alone will determine a teacher’s future – but I have grave concerns that the test scores will gain undue influence in the process and become a lever to force out some teachers who don’t deserve that fate.

However, in the approach that you describe, I still question how reliably anyone can determine that one group of students is sufficiently similar to another group when the sample sizes are at the level of a teacher’s student load, and when the data used for that determination is probably quite limited. I think many teachers could tell stories of how certain classes turn out to be outliers in ways that the available data would never capture. (I had one freshman class section like that this year – every student passed, most did quite well, not one major problem or concern out of the 29 of them. Whatever data they produced, if sorted according to teacher, would make me look highly effective this year and set me up to appear less effective next year, in all likelihood).

I also question how reliably we can predict the future based on past performance; we’re talking about complex sets of skills and knowledge, and probably significant variation from year to year in some subject areas. (For example, a student who mastered biology may struggle with physics). Even if I grant that in my subject area, English language arts, we’re likely to see more continuity in skills, there are still so many variables that affect student performance and are not related to “provision” – difficult (impossible?) to identify all, and then either control for them or find them irrelevant.

I’ll also take a look at the materials and writing on your site to see if I can sort some of this out further.